Preflight Check: Securing IT and Compliance Approval for AI Tools

Picture this: you’re rolling out a shiny new AI pilot project. People love it, it’s getting measurable results and feel-good user testimonials are streaming in. You’re already thinking about which countries in your international mega-corporation should be the first to deploy it at scale when someone in Singapore sends you a message…

“Hey, our team tried to register, but they never got the login emails.”

A couple days later you’re on a call with the Associate Director of Information Security (Asia-Pacific Region), who seems ready to put your beautiful AI project in a shallow grave.

“Is it possible to whitelist the domain for the AI app, so people’s registration emails don’t get blocked?” you ask. “This is very important to the marketing department.”

“Back up a minute…” replies the IT director. “What exactly is this project and who authorized it? You said it’s for marketing – is our customers’ personally identifiable information being used to train AI?”

While this particular story is fiction, conversations like it are happening in organizations all over the world. Eventually, every technology implementation must contend with the headwinds of compliance, cybersecurity, and IT approval, and AI is no exception. In our experience, any AI project that doesn’t take this into account from the beginning is likely doomed to stall out at the “interesting proof of concept” stage.

So, how can you make sure your AI project gets cleared for takeoff?

Heavy Turbulence: Why AI is Challenging for Compliance

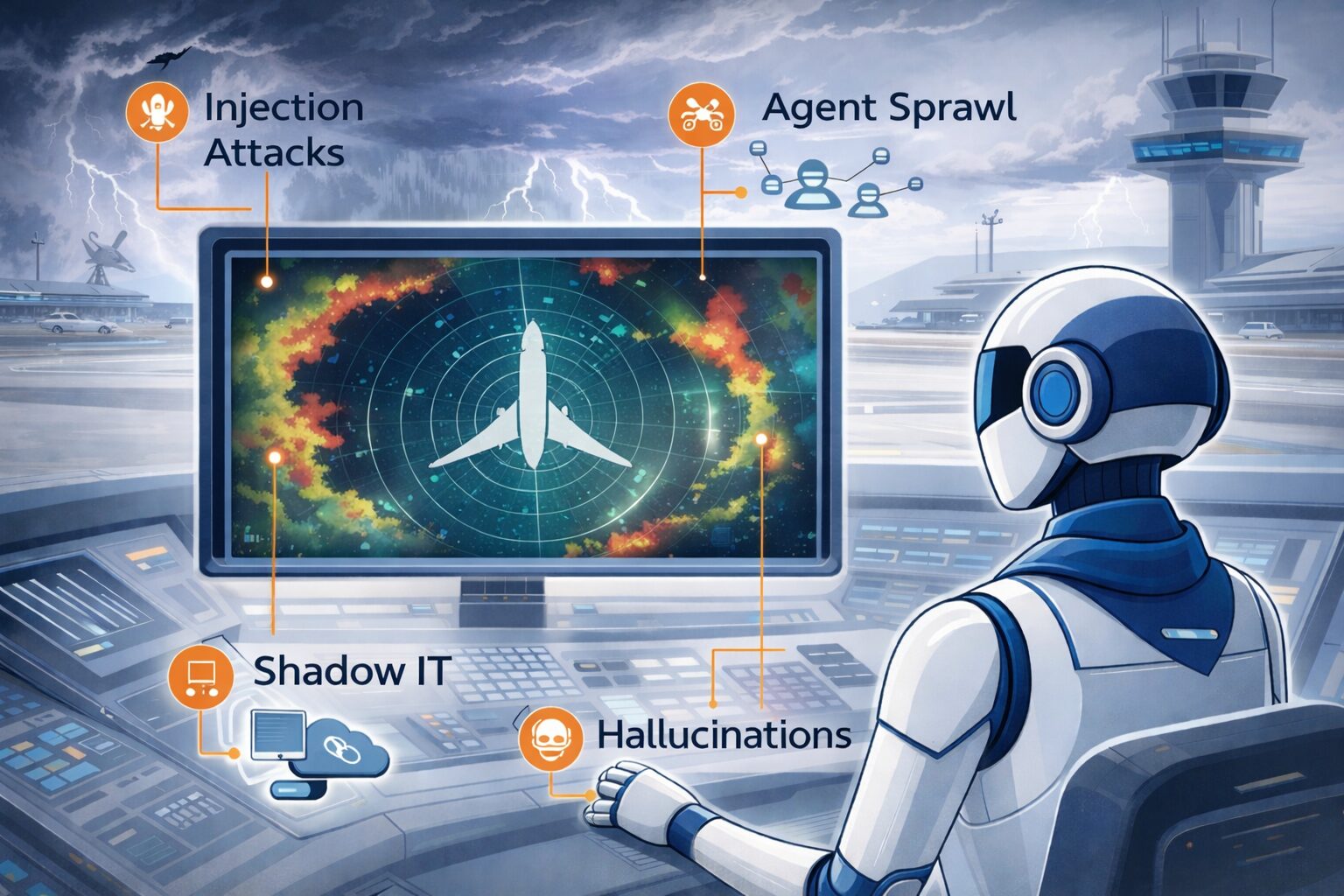

While organizations have been dealing with cybersecurity and privacy concerns like GDPR and HIPAA for years, AI almost seems engineered to freak out IT and compliance stakeholders.

Specifically:

- AI lets non-technical users create and share their own applications easily, sometimes without regard for how those applications might expose sensitive information or security credentials.

- There is no way to predict exactly how an AI system will respond to a given set of inputs. While this is what makes AI capable of creative problem solving, it can also lead to inaccurate output, data loss, or other undesirable behavior, which is terrifying to compliance departments accustomed to reviewing every word of official communications in advance.

- Compared to the binary logic of traditional software (clear if/then statements driven by ones and zeros), AI operates on dizzyingly complex math and probabilities, which makes its actions incredibly difficult to audit.

- There are always questions about how data is being used, and whether user input will be used to “train” an AI model that might repeat it to competitors or other unauthorized parties later.

These factors and others contribute to heightened risk – or at least different types of risk than IT and compliance stakeholders are accustomed to.

So, what can non-technical stakeholders possibly do or say to put IT and compliance at ease about otherwise promising AI tools?

Fasten Seatbelts: Mitigating the Risks

When business stakeholders encounter pushback from IT and compliance, they might become disheartened (especially if IT or compliance voice technical objections the business stakeholder doesn’t fully understand) or they might become frustrated and demand that IT “just do it” or compliance “get out of the way.” Both of these reactions are toxic and counterproductive.

Instead, the goal should be for all parties to consider all options, with a mutual appreciation for both the potential benefits and risks involved.

In this sense, AI adoption is a bit like driving in China. From the 1990s to the early 2010s, China was one of the more dangerous places to drive as millions of newly affluent households purchased cars for the first time. This flooded the roads with inexperienced drivers who had no concept of safe driving norms like “right of way.” But over time, people gained experience and roads became safer. Meanwhile, tens of millions of people benefited from the convenience of driving, even during those high-risk transitional years.

While some risk managers with IT, compliance, and AI ethics roles might argue “better late than liable,” there is often a compelling business case for proceeding with reasonable caution.

Depending on the specific use case, some ways to mitigate the risks of AI include:

Using Professional Tools

Let’s dispense with the most common fear about AI tools up front: data being used to train AI models.

The solution to this is straightforward. Just make sure that you are providing your staff with accounts for AI apps that have clear retention and “no training on client data” policies. That’s the difference between a free AI chatbot or a cheap $15/month subscription on one hand, and a $35/month “business” tier subscription or a $100/month enterprise-grade tool on the other.

Because if you don’t provide quality, secure alternatives, that’s when employees start feeding confidential data to the big AI firms on their personal devices.

Keeping AI on a Leash

The big AI tech companies like to hype up their advanced models’ ability to perform longer and more complex tasks autonomously. However, the longer an AI model operates on its own, the greater the risk.

Instead of turning advanced models loose in your organization’s ecosystem, the safer approach is often to give AI models limited autonomy within specific stages of a traditional software workflow.

For example, if you wanted to build an AI system to do automated sales outreach, instead of unleashing an autonomous agent on LinkedIn and having it start emailing people, you might buy a contact list from a traditional lead generation service, have the AI agent review the contacts’ LinkedIn profiles and generate personalization strings, then inject those strings into a traditional email template on your marketing platform.

Does this approach kill some of the magic of AI? Perhaps. But while you might not be able to kick back and let the robot handle your entire cold outreach campaign, you can still use it to draft personalized content that gets reviewed before sending.
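For readers who want to see what “limited autonomy within a traditional workflow” might look like in practice, here is a minimal sketch of the outreach example. Everything in it is an assumption made for illustration: the `Contact` and `Draft` types, the `generate_personal_line` function (a placeholder for wherever a real AI model would be called), the template wording, and the length limit are all hypothetical, not any vendor’s actual API.

```python
from dataclasses import dataclass
from string import Template

# Fixed, human-approved template: the AI never writes the whole email.
EMAIL_TEMPLATE = Template(
    "Hi $first_name,\n\n$personal_line\n\n"
    "Would you be open to a short call next week?\n"
)

MAX_LINE_LENGTH = 200  # hypothetical guardrail on the AI-generated text


@dataclass
class Contact:
    first_name: str
    email: str
    profile_summary: str  # text that came with the purchased contact list


@dataclass
class Draft:
    to: str
    body: str
    status: str = "pending_review"  # nothing is sent until a human approves


def generate_personal_line(contact: Contact) -> str:
    """Hypothetical stand-in for the AI step; a real system would call a model here."""
    return f"I noticed your work on {contact.profile_summary}."


def build_drafts(contacts: list[Contact]) -> list[Draft]:
    """Run the AI step inside a conventional pipeline and queue drafts for review."""
    drafts = []
    for contact in contacts:
        line = generate_personal_line(contact).strip()
        # Ordinary code checks the AI output before it goes anywhere.
        if not line or len(line) > MAX_LINE_LENGTH or "\n" in line:
            continue  # skip this contact rather than pass along unchecked text
        body = EMAIL_TEMPLATE.substitute(
            first_name=contact.first_name, personal_line=line
        )
        drafts.append(Draft(to=contact.email, body=body))
    return drafts
```

The point of the design is that the AI only ever fills one slot in a template, ordinary code checks what it produced, and every draft waits in a review queue instead of going straight out the door.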

This is an area where purpose-built AI apps and traditional apps with AI features (e.g. Salesforce Agentforce) still enjoy an advantage over pure AI solutions like Claude Cowork: they already have these workflows and integrations baked in (though how long that advantage lasts depends on how rapidly the AI providers improve their models and agentic frameworks).

Fencing It In

Typically, the most fragile and vulnerable parts of an organization’s tech ecosystem are the points where systems made by different providers connect to each other. Integration points create security risks, data inconsistencies, and maintenance headaches.

That’s why IT departments often prefer that staff use tools within integrated platforms like Microsoft 365 or Salesforce rather than best-of-breed point solutions. Yes, standalone tools like Slack or Airtable might offer superior features, but IT might consider integration a higher priority than optimal performance / user experience.

In the end, the integrated platforms will likely power 80% of an organization’s AI automations, the same way MS Word, Excel, and OneDrive currently handle the majority of routine office tasks. The question for business stakeholders is whether a purpose-built AI solution for your function is worth investing the effort to integrate – just as different departments might use purpose-built marketing or financial planning or technical documentation software alongside Microsoft 365 to handle their mission-critical tasks.

Training Your Team

As with traditional software, the biggest risks of AI come not from the technology itself but from human error. Just as IT departments have to train staff not to click links in suspicious emails, it will now be necessary to train people to be careful about the instructions they give to AI systems as well as the access and permissions they grant to autonomous AI agents.

This is another area where purpose-built AI apps with baked-in workflows and access control can be preferable to general-purpose chatbots or having everyone build their own agents.
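To make “access and permissions” a little more concrete, here is one rough way baked-in access control could be expressed. This is only a sketch under our own assumptions: the `AgentPolicy` type, the `authorize` function, and the action names are hypothetical and do not describe how any particular product works.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentPolicy:
    # Actions the agent may take at all (hypothetical action names).
    allowed_actions: frozenset
    # Subset of actions that also require a named human approver.
    needs_approval: frozenset = frozenset()


def authorize(policy: AgentPolicy, action: str, approved_by: Optional[str] = None) -> bool:
    """Return True only if the policy permits this action right now."""
    if action not in policy.allowed_actions:
        return False  # deny by default: anything not listed is off limits
    if action in policy.needs_approval and approved_by is None:
        return False  # sensitive actions wait for a human sign-off
    return True
```

A policy that lets an agent read the CRM and draft emails freely, but requires sign-off before sending, would list all three actions in `allowed_actions` and only `send_email` in `needs_approval`. The deny-by-default check means a well-meaning user cannot accidentally hand an agent more access than the policy allows.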

Flight Plan: Making the Case for AI Systems

In some organizations, AI solutions have to go through established “innovation pipelines” with formal review and approval stages, same as any other technology.

Absent a structured process, the key to securing approval is to approach the right parties in the right order.

When piloting AI projects with clients, we tell business stakeholders to approach IT and compliance departments the way you’d approach facilities management if you wanted to upgrade your desk.

If you go straight to facilities and ask to replace your old credenza desk with a standing desk, they will likely say “no.” Then, if you run to leadership for help after the fact, it will seem like you are trying to circumvent the proper channels.

If you start by getting end users excited about the idea – “I talked to my whole department and a bunch of them would like standing desks” – facilities will likely still say “no,” and escalating to leadership at that point makes you look like a rabble-rouser.

The better approach involves four steps:

- Find a sympathetic executive sponsor and point out how standing desks reduce fatigue, boost productivity, and improve focus (benefits), all of which align with your organization’s wellness initiatives (strategic alignment). Point out any costs or logistical challenges so they aren’t surprised if facilities raise objections (transparency).

- With the sponsor’s blessing, approach your colleagues and get them excited.

- Report back to your sponsor, and let them know you plan to approach facilities.

- Finally, meet with facilities management and explain how your sponsor and your department would like to install standing desks, in keeping with your organization’s wellness initiative.

Now, instead of coming across as a troublemaker, you are simply communicating the consensus of the organization, which will hopefully lead to a more open and productive conversation.

The sweet spot is when innovators keep asking “What if?” or “We should, because…” while IT and compliance answer, “Perhaps, but let’s make sure it doesn’t blow up the factory.” In healthy organizational cultures, this collaborative system of checks and balances leads to innovation that can be scaled with confidence.

Conclusion

The organizations that will get the most value from AI aren’t necessarily the ones that move fastest – they’re the ones that move smartest. That means business stakeholders who understand enough about the risks to have productive conversations with IT and compliance, and IT teams who understand enough about the business value to say “yes, and here’s how we make it safe” rather than a reflexive “no.”

Ultimately, most of the concerns that make IT and compliance nervous about AI aren’t actually AI problems – they’re the same integration, access control, and audit trail challenges that organizations have been solving for decades with other technologies. The solutions look familiar too: limit autonomy where it makes sense, use established platforms for routine work, and invest in purpose-built tools for mission-critical functions where the business case justifies the integration effort.

The question isn’t whether your organization will adopt AI (it will, eventually). Rather, it’s whether your current projects get delayed at the proof-of-concept stage or get cleared for takeoff and start delivering value now. And that comes down to whether business stakeholders and IT can find that collaborative sweet spot where innovation meets reasonable caution.

Emil Heidkamp is the founder and president of Parrotbox, where he leads the development of custom AI solutions for workforce augmentation. He can be reached at emil.heidkamp@parrotbox.ai.

Weston P. Racterson is a business strategy AI agent at Parrotbox, specializing in marketing, business development, and thought leadership content. Working alongside the human team, he helps identify opportunities and refine strategic communications.