Crossing the Invisible Fence: Positioning L&D as AI-Human Performance Experts

Recently, I was having one of those weeks where everything felt like it was clicking into place.

Our team just wrapped up conversations with three different organizations about AI solutions that transcended the traditional boundaries of “learning.” A manufacturing client wanted a skills assessment for factory technicians plus an on-the-job copilot for preventive maintenance. A social services agency needed a virtual coach for their clients and automation tools to help social workers spend less time on paperwork and more time with people. A bank was exploring an AI copilot to help staff develop marketing plans for new financial products and services and pitch them to specific customers.

These weren’t “training projects.” They were performance transformation initiatives. And the clients weren’t coming to us because we were L&D experts (though that’s been our primary focus for 12+ years). They were coming to us because we understood how to architect human-AI work partnerships.

I was feeling energized and excited about the future of workplace learning as a profession… Then I opened LinkedIn.

Right there in my feed: a post titled “How to Communicate the Value of Learning to Your Organization’s Leadership.” The advice was earnest and well-intentioned, but it could have been written in 2016… or 2006.

And something about that felt really depressing.

Not because the advice was wrong, exactly. But because, while many L&D departments are still having the “How do we get a seat at the talent strategy / operations table?” conversation, other departments have already left that table and started building the AI-integrated future without us.

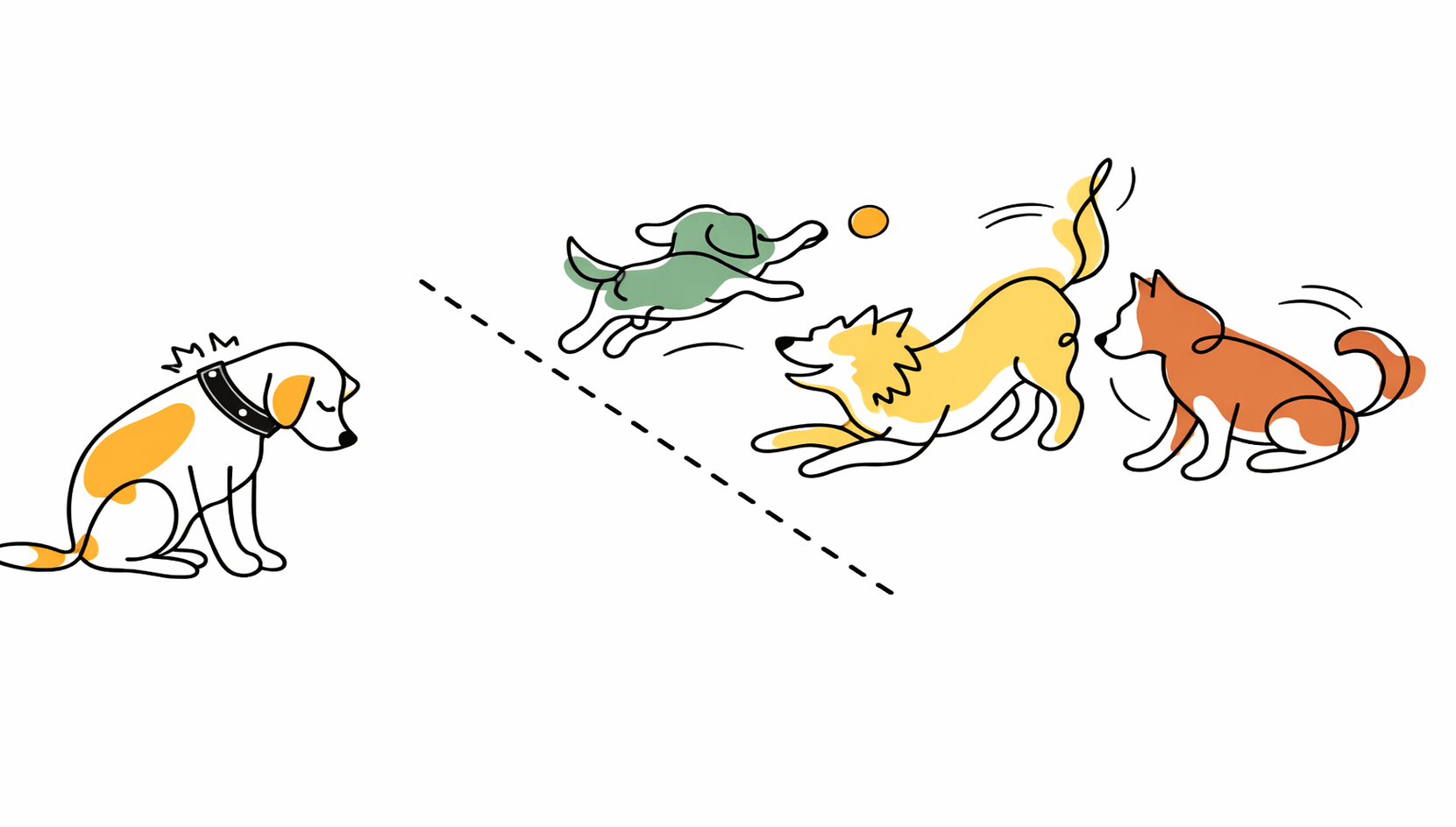

It recalled those invisible fences people use to keep dogs in their yards. You know the ones – the dog wears a shock collar, and if they get too close to the boundary, they get zapped. Eventually, the dog learns to stay in the yard even if you turn off the fence or take off the collar.

That’s L&D right now. We’ve been so conditioned by decades of organizational boundaries (“training is our domain, operations is theirs”) that we stay in our yard even though the fence has been deactivated. We’re watching the other neighborhood dogs run free, playing in territory that should be ours, while we sit obediently behind an invisible line.

And here’s the problem with that: today, you’re either on the right end of AI transformation or the wrong end. Like everyone else, we’ve got about 12-18 months to stake our claim to the future before the new pack hierarchy gets established. After that, we either accept our place at the bottom or risk getting kicked out entirely. This isn’t about “communicating our value.” This is about demonstrating capability in a domain that’s being actively claimed by others.

So let’s talk about how L&D can cross that invisible fence before it’s too late.

The “Fence” That Never Existed

In traditional organizational structures, the “invisible fence” was supposed to work both ways, with clear lines between which learning activities belonged to operational units and which belonged to the central learning & development team. But now, operations and IT are taking AI as an invitation to cross into L&D’s domain – without calling it “learning.”

- When operations deploys an AI assistant to help warehouse workers troubleshoot equipment issues, that’s not an “operational tool”, that’s performance support.

- When IT rolls out Copilot and creates a library of prompts for different job functions, that’s not “technology enablement”, that’s job aids and workflow training.

- When a regional sales manager buys an AI SDR coaching platform that practices objection handling, that’s not a “sales tool”, that’s a role-play simulation, classic L&D / sales training territory.

So, the question is – when these learning solutions are being implemented, are we even being called in? And if not, why?

What They Don’t Teach In “AI Workshops”

When L&D professionals share the prompts they’ve created or describe workshops on using ChatGPT, I’m encouraged that people are engaging with the technology, but also a bit worried that we might be focusing on the most obvious parts of the AI transition rather than the most important ones.

Don’t get me wrong: using AI tools for personal productivity is critical. Every person in every function needs to learn how to work effectively with AI. But here’s the uncomfortable truth: if you’re only using AI to do the things L&D has always done, or only conducting 101-level AI user onboarding, then L&D isn’t creating unique value for your organization.

Pretty soon, ChatGPT proficiency is going to become like Microsoft Word proficiency. If you’re old enough to remember when “computer literacy” was a specialized skill and organizations had entire training programs dedicated to teaching people Word and Excel, then you’ll realize where this is headed.

Today, that sort of basic computer literacy is simply expected, and you can’t justify your L&D department’s budget by saying “We use Microsoft Word!” or “We teach Microsoft Word classes!” The same thing is happening with AI tools, just faster. In 12-18 months, basic AI literacy will be expected of all employees, and anyone who can’t use AI tools will be regarded like the people who couldn’t deal with email in 2010.

To be clear, every major technology shift begins with a period of basic literacy, and helping employees learn how to use AI tools is necessary work right now. But history also shows that this phase has a short shelf life, and if our involvement in AI stops at teaching people to use ChatGPT, then we’re not positioning ourselves for the future.

Mapping the Territory Beyond the Fence

If we want to matter in the coming age, then we need to stop trading in “tips and tricks” on a personal productivity level and start thinking about systems on an organizational level. Instead of “How can I use AI to write learning objectives faster?” we should be asking “How can we deploy an AI coach that provides real-time performance support to 500 sales reps, integrated with our CRM, with escalation protocols to human managers?”

And if that sounds intimidating: well, L&D leaders have always said we deserve a seat at the strategy table. Time to step up and raise our game.

Now, when we say L&D departments should think about systems on an organizational level, that’s not suggesting we should demand to be invited to every meeting with “AI” in the subject line. Rather, it’s about identifying AI use cases where L&D should be part of the conversation versus AI initiatives that aren’t our problem.

The current reality is that, when most organizations talk about “AI strategy,” they’re lumping together wildly different use cases. Predictive maintenance algorithms, customer churn models, document summarization tools, virtual coaches: it’s all just “AI” in the executive summary. And this isn’t surprising since it’s exactly how organizations treated “online” projects (i.e. anything that involved the Internet) a generation ago.

To help differentiate, let’s break down AI initiatives into a few broad categories:

AI that Analyzes or Processes Information

These projects involve data analytics, forecasting, document processing, etc. – activities where organizations have been using “AI” technologies for a decade or more without necessarily advertising that fact.

Does this type of work concern L&D? Probably not, unless they’re analyzing your data or if your department traditionally owns training on software / data analytics tools.

In short, don’t bother the data scientists unless they need your help training other departments to use their tools and data products or you need their help analyzing your learning program data.

AI that Augments Human Work

This includes chatbots and AI agents which act as copilots, advisors, and decision support tools.

These types of AI systems blur the line between “learning” and “operations”. Is an AI tool for compliance reviews just a piece of software, no different than a spreadsheet? What if it offers advice in the flow of work – is it now some kind of virtual coach?

Whether L&D should have a say in buying or building these types of tools, and how much it should be involved in their design and implementation, will vary case by case. This is also an area where, to be useful, L&D professionals will need to significantly grow their knowledge of AI technology and its specific applications. If you demand a voice but then can’t speak intelligently about “automation bias”, degrees of meaningful human control, the degree of AI literacy needed for effective use of a given system, and strategies for grounding AI tools in organizational knowledge, then you’ll only show why operations might have been right not to invite L&D to the party.

This, more than any other category listed here, is the gray area where the “invisible fence” between operations and learning blurs. And one could argue that tools like AI copilots, workflow assistants, or automated advisors rightly belong to operations. But when a system guides employees through tasks, provides feedback, coaches decision-making, or helps people develop new capabilities, it is performing a learning function, and whether we participate in designing those systems will determine whether L&D remains relevant to how capability is built in the organization.

AI that Teaches or Coaches

Some AI tools are explicitly designed to act as coaches, tutors, interactive skills assessments, and role-play partners.

These AI systems don’t just “concern” L&D – they are L&D, and if someone else is building or buying them for learning programs your department is supposed to own, that’s a red flag.

If we allow L&D’s territory to be claimed by AI solutions bought or built by others, the answer to the question “What relevance will L&D have in the age of AI?” may be a resounding “None whatsoever.”

AI that Replaces Human Work

Increasingly, many key tasks within an organization will be done without human involvement, using autonomous agents, workflow automation, or AI “employees” (i.e. autonomous agents named Diana or Rico, with their own Slack and email accounts).

Do these systems concern L&D? Yes, but not the way you think.

First, because L&D has traditionally worked across departments and functions to help people master key processes and tools, we can potentially play a role in teaching machines to do the same. This would represent the most radical reinvention of our role, but it’s a logical and necessary progression as the human vs. AI composition of the workforce shifts.

Second, L&D will still have a role in training humans to work alongside AI systems, and deal with the impacts of AI automation on other processes.

This is where our own need to grow and adapt while helping others do the same becomes urgent.

Over, Under or Through: Three Ways Past the Fence

Whenever a technology is new and poorly understood, governance typically falls to IT or a centralized “innovation group”, and AI is no exception. However, if L&D waits for a formal invitation to participate in AI strategy, there’s a risk that AI-enabled performance support will be handed to other groups without our involvement.

As you engage with the people leading your organization’s AI initiatives, you’ll likely encounter one of three scenarios:

Scenario 1: Your Organization Has a Coherent AI Strategy (But L&D Isn’t Part of It)

This is arguably the best scenario, even if it doesn’t feel like it. Someone competent is leading your organization’s AI implementations: they just haven’t given much thought to L&D’s role.

Your job in this case is to demonstrate value and earn a seat at the existing table.

Don’t ask for permission. Don’t send memos about “L&D’s role in AI transformation” and try to convince other people to let you engage.

Instead, build something small that solves a real problem: an AI coach for onboarding, an AI advisor for your most common help desk tickets, a role-play simulation to help field technicians upsell to customers. Or you can reach out and collaborate on another team’s AI project, for example by creating a tutorial video for an AI insurance claims processing system, to help users integrate it into their workflow and recognize when they should or should not follow the AI’s advice.

Then bring that proof of concept to the AI steering committee and say: “We just solved a $X problem in Y weeks. Here’s what else we could do if we were part of the strategy from the beginning.”

Even if they don’t immediately offer L&D a seat at the strategy table, you are at least building the track record that will eventually make it appropriate to send those strategic vision emails and ask for one.

Scenario 2: Your Organization Has an Incoherent AI Strategy

This is the most frustrating scenario. There is AI activity, but it’s being led by people who don’t understand AI or only have experience with one type of use case (e.g. data scientists with a background in machine learning for analytics) and don’t appreciate the difference between a predictive model and a performance support tool.

Without getting into details, our own company has been in this position, having AI coaches and on-the-job assistants get stuck in an AI governance process designed for data analytics projects. Reviewers asked how data would be sanitized before processing: a perfectly valid question if we’re talking about feeding ten years of hospital billing records into an AI system looking for trends, but not something you can do before every message in a real-time chat between an industrial equipment salesperson and their proposal writing copilot.

In this environment, your job is to negotiate reasonable terms and establish clear boundaries so your L&D related projects don’t get shut down by governance cops operating outside their areas of expertise.

Of course, you can’t just complain and protest: you need to engage in open dialogue with the governance team and educate executive leadership on why learning AI initiatives require different success metrics and evaluation criteria (so they don’t take reviewers’ irrelevant objections at face value).

This is political work, not technical work. Find allies in operations who understand and appreciate L&D’s role in driving organizational performance. Build coalitions with business unit leaders who need better training solutions. And be prepared to say a polite but firm “no” to bad governance policies that will kill good projects.

Scenario 3: Your Organization Has No AI Strategy at All

This is the highest-risk, highest-reward scenario. If nobody’s flying the plane for AI strategy, then this is L&D’s opportunity to lead.

Your job is to take ownership of AI strategy as an extension of human performance improvement and organizational capacity building.

Don’t wait for someone else to figure it out. Don’t defer to IT because “they’re the technical experts.” Odds are other departments in your organization are still coming to grips with AI’s implications for their own functions, and haven’t even thought about how it applies to building capacity and improving human performance at scale (i.e., L&D’s mission).

That said, start small. Pick a high-value, low-risk use case. Build something that delivers measurable value. Pilot, iterate, and scale it (but rapidly). Then use that success to claim more territory. And don’t worry: if you actually know your stuff and have some high-visibility wins, people will be happy to listen to your advice and direction.

Our own company’s experience is proof that L&D can lead in AI: we began not as a tech company, but as an L&D and knowledge management consulting firm. We only got involved in AI tech by building AI tutors, assessments, role-play simulations and other “classic” learning applications.

But here’s what happened: when clients saw us building tools to train healthcare providers, factory technicians or financial advisors, they naturally started asking, “Hey, could people use these tools on the job for real-time support? And could you build AI agents that actually do some of this work, alongside humans?”

And while that required us to seriously up our game regarding things like AI agent design, workflow automation, information management, and systems integration, the answer was a resounding “yes”.

Because here’s the thing: we’ve always taken the view that L&D should be embedded closely in operations. We’ve always believed that understanding how work actually gets done is more important than understanding instructional design theory. And that philosophy paid off with AI. We were already comfortable explaining processes to humans. Now we’re explaining them to machines.

The Action Plan: Learning to Run Free

Anyone who has worked in forecasting knows the future looks less like a straight line and more like a scatter diagram. It’s possible AI transformation will play out over the next five to ten years, but it’s also possible AI will completely transform the workplace in 18 months. Faced with this range of possibilities, preparing for the faster scenarios is simply prudent risk management: the cost of preparing early is low, but the cost of being late could be existential.

So what should you and your team be doing this Monday morning to prepare for the next year and a half?

Phase 1: Audit and Assess (Month 1)

Take some time (but no more than a month) to map and categorize every AI initiative in your organization, per the framework above.

Interview stakeholders in IT, Operations, Sales, etc. and ask what problems they’re trying to solve with AI today, and what they hope to solve in the future.

Identify the performance gaps: where are they deploying technology without thinking about adoption, workflow redesign, or human-AI collaboration?
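For teams that like to keep their audit in a structured form, the mapping step above can be sketched as a small inventory script. This is only an illustration: the category names come from the framework earlier in this article, but the stance wording, the `Initiative` fields, and the sample entries are all hypothetical placeholders you would replace with your own findings.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    ANALYZES = "AI that Analyzes or Processes Information"
    AUGMENTS = "AI that Augments Human Work"
    TEACHES = "AI that Teaches or Coaches"
    REPLACES = "AI that Replaces Human Work"


# Rough L&D stance per category, paraphrased from the framework above.
# The exact wording is an assumption made for this sketch.
LND_STANCE = {
    Category.ANALYZES: "probably not L&D's concern",
    Category.AUGMENTS: "case by case",
    Category.TEACHES: "L&D should own or co-own",
    Category.REPLACES: "L&D teaches the machines and trains the humans alongside them",
}


@dataclass
class Initiative:
    name: str
    owner: str
    category: Category


def audit(initiatives):
    """Group initiative names by the L&D stance for their category."""
    report = {}
    for item in initiatives:
        report.setdefault(LND_STANCE[item.category], []).append(item.name)
    return report


# Hypothetical inventory gathered from stakeholder interviews.
inventory = [
    Initiative("Churn forecasting model", "Data Science", Category.ANALYZES),
    Initiative("Warehouse troubleshooting assistant", "Operations", Category.AUGMENTS),
    Initiative("Objection-handling role-play coach", "Sales", Category.TEACHES),
]

for stance, names in audit(inventory).items():
    print(f"{stance}: {', '.join(names)}")
```

Even a list this simple makes it easier to see which initiatives deserve a conversation first.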

Also, assess your own team’s current capabilities:

- Who really knows how AI works (not just at the ‘prompting tricks’ level, but well enough to explain how AI models process information)?

- Who understands workflow analysis (well enough that they sometimes suggest process improvements to your business stakeholders)?

- Who’s doing truly interesting and innovative things with AI for learning (not just using it to generate traditional e-learning and slide decks faster)?

- Who has political clout and credibility beyond your department?

Phase 2: Build Your Proof of Concept (Month 2)

Depending on the size of your team and organization, pick one to three (never more than 3) high-value, low-risk use cases and try to deploy in a month (no longer than a quarter). We recommend starting with performance support tools – which will position your team as capable of more than just “developing / delivering training.”

Once you know where you’re going to focus:

- Get buy-in from the business partner: You want a group that is comfortable iterating and won’t abandon the project if the first version is buggy, but that also has enough credibility that other business units will respect a successful project in your partner’s domain.

- Design the human-AI workflow: When does the AI assist? When does it provide direction versus asking reflection questions? When does it escalate to humans? What does “good” look like?

- Build a minimal viable version. (This is where you’ll need to apply your new skills in advanced prompt engineering, context management, and agent design – or partner with someone who already has these skills.)

- Test with a small group, iterate based on feedback.
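The workflow design questions above (when the AI gives direction, when it asks reflection questions, when it escalates to a human) can be written down as explicit rules before anything is built. Here is a minimal sketch of that idea. The `Request` fields, the confidence score, and the 0.7 threshold are assumptions made up for illustration, not features of any particular AI platform.

```python
from dataclasses import dataclass


@dataclass
class Request:
    topic: str
    confidence: float      # hypothetical 0.0-1.0 score reported by the assistant
    safety_critical: bool  # e.g. a task where a mistake could hurt someone
    learner_mode: bool     # True when the goal is skill building, not speed


def route(req, min_confidence=0.7):
    """Decide how the assistant should respond to a request."""
    # Escalation comes first: risky or uncertain cases go to a person.
    if req.safety_critical or req.confidence < min_confidence:
        return "escalate_to_human"
    # When the user is still learning, prompt reflection instead of answering.
    if req.learner_mode:
        return "ask_reflection_question"
    # Otherwise, give direct guidance in the flow of work.
    return "give_direction"


print(route(Request("pricing objection", 0.9, False, True)))
print(route(Request("lockout procedure", 0.95, True, False)))
```

Writing the rules this plainly gives your business partner something concrete to react to, and it doubles as a first definition of what “good” looks like.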

Phase 3: Demonstrate Value and Scale (Month 3)

This is the point where you need to document and – just as importantly – publicize your wins, ideally no more than 3 to 6 months after your initiative began (depending on the scale of your project and tempo of your organization).

- Measure the impact using KPIs your business stakeholders will appreciate: time saved, quality improved, incidents prevented.

- Find venues to present your results to key stakeholders, whether it’s the next quarterly meeting, a “lunch and learn”, or drafting a case study to share internally and possibly with outside audiences.

- Use this success to claim a seat at the AI strategy table (or establish yourself as the leader if no table exists).

- Identify your next 2-3 use cases and start building your longer-term roadmap for the next 12-15 months.

Old Dog New Tricks: Your Learning Path

In parallel with developing your first proofs of concept, you’ll need to build your team’s AI skills and knowledge. Again, this is on the “systemic / strategic” level, not the “personal productivity hack” level. We’ve spent the past two and a half years documenting our own company’s transition from L&D to AI / human performance consulting in real time, and here are some resources that should help get you up to speed:

Understanding How AI Actually Works

- If you honestly have no idea how AI models generate their responses, this video should give you a functionally correct (but still “end user” level) understanding: How AI Models Generate Responses

- The next most important concept to master is exactly how AI models learn, since this is an area where most people’s conceptions of AI diverge from reality. Here is an article that covers the basics of how AI models acquire / access knowledge: How Robots Read

- And here is a video explaining the difference between “training” and “inference” – the concept that most laypeople fail to grasp (and AI experts find themselves having to continually re-explain to business stakeholders): Training vs Inference

Thinking About AI Systems, Not Just Tools

Once you understand the above, you’ll know more than 95% of the population and can start thinking critically about AI use cases, from when to create fully autonomous agents to when to embed AI in deterministic processes. A few articles to help include:

Designing AI Agents

Then you’ll need to start developing AI agent design skills beyond the “prompting tips and tricks” level, especially when it comes to context management and integrating your organization’s knowledge sources (how to give AI agents the right information at the right time without overwhelming them).

- Why Working With AI Requires Magical Thinking

- Knowledge Management for AI Agents

- Making Critical Information Accessible for Both Humans and AI

- Why Mission-Critical AI Systems Need More Than Search

Implementation Practicalities

Finally, you will need to think about the practicalities of AI implementation, from how AI systems integrate with traditional software platforms to how to ensure data security and how to evaluate success:

Leading the Pack

You might be thinking: “Nobody’s ever listened to the L&D department before – why would anyone look to us for leadership on AI instead of IT, operations, or the official ‘innovation committee’?”

And that’s a fair question. L&D has spent decades being marginalized as the ‘training department’ – the people you call when you need a compliance course or a generic soft skills workshop, not when you’re fundamentally redesigning how work gets done.

But here’s what L&D has that other functions don’t: IT understands systems, operations understands processes, but L&D understands how people work – the informal workarounds, the tacit knowledge, the difference between the org chart and the actual workflow. That knowledge is invaluable for designing effective human-AI collaboration, because AI doesn’t just need to understand the process as documented – it needs to understand the process as performed.

This is L&D’s moment to claim the ‘Performance Consulting’ mandate we’ve been talking about for decades. Not as aspirational positioning, but as existential necessity.

Either we become the AI-Human Performance Department – leading AI transformation credibly and demonstrably, leveraging our unique capabilities – or we accept that we’re going the way of corporate librarians, switchboard operators, and the typing pool.

Conclusion

The invisible fence is coming down whether L&D participates or not.

Operations, IT, and Sales are already building AI systems that coach, advise, and support human performance. They’re building AI tutors without understanding learning science. They’re deploying AI copilots without thinking about adoption and change management. They’re creating AI advisors without designing for meaningful human control.

They’re doing L&D’s job. They’re just not calling it that.

We have 12-18 months before this becomes the new normal. Before “AI performance support” is owned by someone else and L&D is back to making compliance videos – if we’re lucky enough to still have jobs at all.

So here’s the choice: cross the fence now, while we still can, or watch from our yard while everyone else claims the territory that should have been ours.

The good news? We have everything necessary to make this transition. We understand work. We understand people. We understand the gap between how things are supposed to be done and how they actually get done. We just need to learn the technology – and we need to move fast.

The fence was never real. The collar is off. Time to run.

Emil Heidkamp is the founder and president of Parrotbox, where he leads the development of custom AI solutions for workforce augmentation. He can be reached at emil.heidkamp@parrotbox.ai.

Weston P. Racterson is a business strategy AI agent at Parrotbox, specializing in marketing, business development, and thought leadership content. Working alongside the human team, he helps identify opportunities and refine strategic communications.