Coloring Inside the Lines: The Trouble with Letting AI Improvise Everything

There’s a commercial for Base44, a “vibe coding” app, on YouTube where a team of office workers marvel at how their non-technical colleague managed to create a custom budgeting app with zero programming expertise. The message is clear: whatever you might need, AI can create it, on demand.

It’s an appealing vision, and AI’s ability to rapidly improvise solutions has tremendous value in the right contexts. Yet, it also poses uncomfortable questions:

- “What happens when an organization has a dozen different vibe coded budgeting apps across various departments?”

- “Just because AI makes something possible, does that mean we should let users do it?”

- “If you tell an AI agent to ‘make a budget app’ (or ‘develop a business plan’ or ‘review our maintenance logs’) – how much discretion should the AI have regarding how to do it?”

None of these questions are new: organizations have been balancing autonomy versus process adherence for human employees since the dawn of corporate time. However, with AI, organizations tend to go to one extreme or the other: either locking AI agents down completely, or giving them carte blanche.

In this article we’ll look at various AI solutions our company has implemented for clients, and how we tried to find a middle path between the two.

Workforce Training: Curricula vs. Chat

Our company’s background is workforce training, and that’s an area where AI’s ability to adapt to individual users is a game changer. Instead of having everyone watch the same video or complete the same e-learning, AI tutors and coaches can tailor explanations, examples, and questions to a user’s specific role, knowledge level, and interests.

When we first started demoing these types of AI training solutions to clients in early 2024, most organizations were skeptical: “What if the AI hallucinates and starts giving inaccurate information? How can our compliance department sign off if we have no idea what it’s going to say? Can we make sure it only references our approved documents, and nothing else?”

But as AI entered the mainstream, we started having the opposite conversation: “Why bother with traditional courses? Why can’t we just give ChatGPT our manuals and let people ask questions?”

As is often the case when viewpoints differ radically, the truth lies somewhere in between.

The fear that AI is a pathological hallucination machine that can’t be trusted to answer basic questions is honestly outdated and overblown: these days, a well-designed AI agent with proper knowledge management is about as reliable as most human training facilitators.

But the “give a chatbot a PDF and call it a coach” approach also gets it wrong: leaving it entirely up to the user to drive the training agenda is dangerous, given that learners don’t know what they don’t know.

There’s a reason why training workshops aren’t just Q&A sessions with a facilitator. If banks trained their tellers that way, the tellers might ask about the systems they’re using, the tasks they’ve been assigned, their benefits package, and the location of the lunchroom… but never think to ask about anti-money laundering regulations.

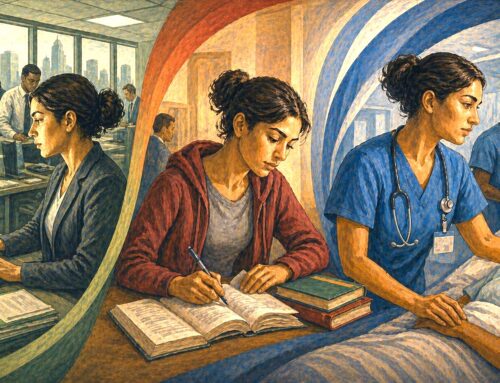

Ultimately, workforce training is an area where the best way to leverage AI is to simply treat AI agents like people. In the past, whenever our company created traditional training workshops, we’d give the (human) facilitators a pre-defined sequence of concepts to cover, plus a package with slides, videos, activities, knowledge checks, and prompts for open-ended discussions. We’d also include notes on when it’s OK to skip ahead if the audience already knows something and suggestions for adapting the material for specific audiences.

Today, we basically give all these same things to the AI tutors we build for clients – grounding the AI in a consciously designed curriculum (complete with a library of pre-existing slides, videos, and downloads) while giving it license to personalize certain aspects of delivery for each learner. And while AI agents might require more explicit instruction on how long to let discussions continue or when to ask a follow-up question, once you provide those directions, the AI agent will apply them as faithfully as your best human facilitators.

Workflows: Consistency vs. Creativity

While our company started out developing AI agents for workforce training, it didn’t take long for clients to ask “If you can build an AI agent to teach someone to perform a task, couldn’t you just build an AI agent that… performs the task?”

The answer, of course, is “It depends on the task.”

So far we’ve created AI agents that either assist with or automate everything from paperwork at social services agencies to proposal writing at financial institutions to preventive maintenance at factories. But while most AI models have a general idea of what should be included in a business proposal or what you should look out for when inspecting a boiler, the trick is ensuring the AI system follows your specific process, references your specific policies / equipment manuals, and generates output in the specific format you need, consistently, every time.

These challenges can be partially solved with prompts that include detailed steps and examples, though sometimes AI models will still exhibit an unacceptable degree of variation in how they interpret instructions.

In these cases, it’s often good to have the AI agent generate output in a format that can be validated by a traditional software application. For instance, if there are nine things that need to be done during a particular stage of a process, have the AI model create a structured list of each task, its completion status, and an explanation for how the success criteria were fulfilled (or why a certain criterion can be skipped or deferred). A traditional software app can then apply binary true/false logic to decide whether to advance the AI system to the next stage of the workflow.
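As a rough illustration of that kind of gate, here is a minimal Python sketch. It assumes the agent returns a JSON list of objects with `task`, `status`, and `explanation` keys; those key names, the status values, and the `check_stage` function are all hypothetical, chosen for the example rather than taken from any real client system.

```python
import json

# Statuses that count as "resolved" for the purpose of advancing (assumed names).
RESOLVED_STATUSES = {"complete", "skipped", "deferred"}


def check_stage(required_tasks, agent_output):
    """Return (ok, problems) for one workflow stage.

    required_tasks: task names the approved process demands for this stage.
    agent_output:   JSON text from the agent, expected to be a list of objects
                    with "task", "status", and "explanation" keys.
    """
    try:
        items = json.loads(agent_output)
    except json.JSONDecodeError:
        return False, ["output is not valid JSON"]
    if not isinstance(items, list):
        return False, ["output is not a list of tasks"]

    # Index the agent's entries by task name, ignoring malformed entries.
    reported = {item.get("task"): item for item in items if isinstance(item, dict)}

    problems = []
    for task in required_tasks:
        item = reported.get(task)
        if item is None:
            problems.append(f"{task}: not reported")
            continue
        status = item.get("status")
        if not isinstance(status, str) or status not in RESOLVED_STATUSES:
            problems.append(f"{task}: status is {status!r}")
        elif not str(item.get("explanation", "")).strip():
            problems.append(f"{task}: no explanation given")

    # The stage advances only when every required task passed every check.
    return not problems, problems
```

A gate like this only confirms that the agent accounted for every required task in the expected shape. It cannot tell whether a task marked complete was actually done, which is why the explanation field and a human review step still matter.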

Likewise, we will often instruct an AI agent to continually verify and re-verify that it has all of the requisite documentation loaded before generating output. Just as AI tutors and coaches shouldn’t be doing curriculum design on the fly, workflow assistants and copilots shouldn’t be improvising checklists, procedures, or schematics. Instead, they should be surfacing the actual approved checklist, the actual SOPs and the actual diagrams, then helping users work through them.

Beyond that, there’s the question of how much we want to keep a “human in the loop.” Recently, we created an AI agent that automated 85% of a client’s monthly reporting work: basically, the AI system did everything short of filling out the report headers and emailing it to the user’s supervisor. During feedback sessions, members of the client’s staff asked why the process couldn’t be 100% automated, but we discussed it with management and agreed that we still wanted people to at least glance at the AI’s output prior to submission, and avoid creating a dynamic of blind faith in the system’s accuracy.

On the flip side, we also give AI agents the ability to verify the human’s work: for example, if a technician mentions an equipment problem during an AI-assisted inspection but doesn’t document a corrective action, the system can automatically alert their supervisor rather than letting the gap slip through. It’s not about micromanaging: it’s about humans and AI working together to catch issues before they become failures.
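The supervisor alert described above can come down to a very plain rule once the agent has turned the conversation into structured findings. The sketch below assumes a hypothetical `Finding` record with `equipment`, `problem`, and `corrective_action` fields; how the findings are extracted and how the alert is delivered are left out.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """One item recorded during an inspection (hypothetical structure)."""
    equipment: str
    problem: str
    corrective_action: str = ""


def findings_needing_escalation(findings):
    """Return findings that name a problem but record no corrective action."""
    return [
        f for f in findings
        if f.problem.strip() and not f.corrective_action.strip()
    ]
```

Keeping the escalation rule in ordinary code, rather than in the prompt, means the decision to notify a supervisor does not depend on how the model interprets its instructions that day.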

While this type of hybrid system isn’t perfect (the AI agent can mark checklist items ‘complete’ that it didn’t actually fulfill, and a human can rubber-stamp its output without actually looking), it does enforce some auditability, explainability, and accountability.

Once again, it’s a matter of giving the AI agent freedom to adapt, brainstorm, and problem-solve within a structured process, as opposed to trying to script its every action or letting it operate without guidance or guardrails.

Reports: Flexibility vs. Formatting

The idea of “talking to your data” has been a major selling point for enterprise AI. And we’ve seen firsthand how a well-designed conversational AI agent can help people engage with data far more than the best-designed dashboard. However, AI has its limitations and blind spots when it comes to reporting, particularly in situations when the format matters as much as the content.

Consider a social services agency that bills government funders for casework hours. The billing reports need to match specific formats with specific fields, because that’s what the reimbursement system expects. An AI that summarizes casework activity in flowing prose doesn’t solve the problem.

Or consider a manufacturing facility that produces daily preventive maintenance reports. Operations managers need Monday’s report to look like Tuesday’s report to look like last month’s reports, because they’re scanning for anomalies and trends. If the AI decides to reorganize the information based on what it thinks is most important that day, it defeats the purpose.

Similarly, consider any organization subject to regulatory audits. Auditors don’t want creative interpretations of compliance data. They want standardized documentation that they can systematically review against established criteria.

In these cases, we have AI agents generate output in structured formats that can be validated by traditional software, then automatically converted into the required templates – essentially combining AI’s analytical capabilities with the reliability of conventional form-filling (think an MS Word mail merge or an Adobe PDF form).
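To make the "validate, then fill the template" idea concrete, here is a small Python sketch using the standard library's `string.Template` as a stand-in for a mail merge. The field names, the template layout, and the `render_report` function are invented for illustration; a real funder's format would define its own.

```python
from string import Template

# Fields the fixed report format expects, with the types we accept (assumed).
FIELD_TYPES = {
    "client_id": str,
    "caseworker": str,
    "service_date": str,
    "hours": (int, float),
}

# The layout never changes; only the validated values are substituted in.
REPORT_TEMPLATE = Template(
    "Client ID: $client_id\n"
    "Caseworker: $caseworker\n"
    "Service date: $service_date\n"
    "Hours billed: $hours\n"
)


def render_report(record):
    """Validate the agent's structured record, then fill the fixed template."""
    if set(record) != set(FIELD_TYPES):
        raise ValueError(
            f"expected fields {sorted(FIELD_TYPES)}, got {sorted(record)}"
        )
    for name, expected in FIELD_TYPES.items():
        if not isinstance(record[name], expected):
            raise ValueError(f"field {name!r} has the wrong type")
    if record["hours"] <= 0:
        raise ValueError("hours must be positive")

    # Format hours consistently so every report looks the same.
    values = dict(record, hours=f"{record['hours']:.2f}")
    return REPORT_TEMPLATE.substitute(values)
```

The agent decides what goes in each field, and the conventional code decides what the report looks like, so Monday's report and Tuesday's report share a layout regardless of how the model phrased things.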

Some AI enthusiasts argue that rigid report formats are themselves obsolete, and organizations should just grant AI agents access to raw data and let them reformat it however their users prefer. And that’s a fine vision for 2030. But for now, we’re still living with regulatory requirements, institutional inertia, and interoperability challenges that demand standardization, and aren’t going away because agentic AI exists. Organizations need AI systems that can work within current constraints, not systems that demand entirely new infrastructure.

Conclusion

What ties these three use cases together isn’t creativity versus structure: it’s governance.

Like the office worker spinning up their own budgeting app in that YouTube commercial, AI’s ability to generate instant results based on simple, natural-language input is exhilarating… but also dangerous. The organizations that struggle with AI implementations often go into the process with great enthusiasm but little forethought. They focus on the exciting capabilities while overlooking the boring-but-essential questions such as:

- When should we give AI agents prescriptive instructions and when should we let them improvise?

- What should AI be allowed to do autonomously versus with human oversight?

- How do we ensure consistency across teams and over time?

- How do we audit AI-assisted work when something goes wrong?

There are absolutely contexts where freeform, creative AI output adds value: brainstorming, drafting, prototyping, troubleshooting, exploration, and personalization. But the choice of how much latitude to give AI needs to be deliberate, not “all” or “none” by default.

Well-designed AI agents are quite capable of coloring inside the lines. The question is whether you’ve decided where those lines should be.

Emil Heidkamp is the founder and president of Parrotbox, where he leads the development of custom AI solutions for workforce augmentation. He can be reached at emil.heidkamp@parrotbox.ai.

Weston P. Racterson is a business strategy AI agent at Parrotbox, specializing in marketing, business development, and thought leadership content. Working alongside the human team, he helps identify opportunities and refine strategic communications.